(“Joint Statement”). The Joint Statement is aimed at services likely to be accessed by children that fall within the scope of the Online Safety Act 2023 (“OSA”) and UK data protection legislation, and is designed to help providers comply with both their online safety and data protection obligations when deploying age assurance.

The Joint Statement arrives alongside a broader push from both regulators, including Ofcom’s recent call to action directed at major tech firms, an open letter from the ICO urging platforms to strengthen their age checks, and several enforcement actions by each regulator.

Some key takeaways from the Joint Statement include:

1. The Joint Statement Emphasises a Shared, Risk-Based and Tech-Neutral Approach.

The Joint Statement emphasises several areas of alignment between Ofcom’s and the ICO’s approaches to age assurance:

- Self-declaration is not sufficient. The regulators agree that self-declaration alone—such as a tick-box age confirmation—is not an effective means to determine users’ ages or prevent underage access. Notably, the ICO goes further, stating that currently available profiling-based approaches are also not sufficiently effective.

- Anti-circumvention. Age assurance methods must address risks of circumvention that could undermine the accuracy and robustness of the process.

- Data protection compliance is required. Both regulators acknowledge that all age assurance methods involve the processing of personal data, and confirm that such processing is allowed provided the method chosen is necessary, proportionate to the risks, and compliant with data protection legislation.

- Flexible, tech-neutral, feasible solutions. Both regulators adopt a technology-neutral approach, giving services flexibility to select methods appropriate to their context—including size, user base, and available resources—provided those methods meet the applicable legal requirements. The regulators underscore that they do not expect services to deploy age assurance methods that are not technically feasible or that introduce risks to rights and freedoms outweighing the benefits.

2. Under the OSA, Certain Services Must Use “Highly Effective” Age Assurance.

The Joint Statement highlights the OSA’s requirements for “highly effective age assurance”, which apply to: (1) user-to-user services regulated under Part 3 of the OSA that are likely to be accessed by children and that allow “primary priority content” (including pornography, self-harm, suicide, and eating disorder content); and (2) Part 5 services that publish their own pornographic content.

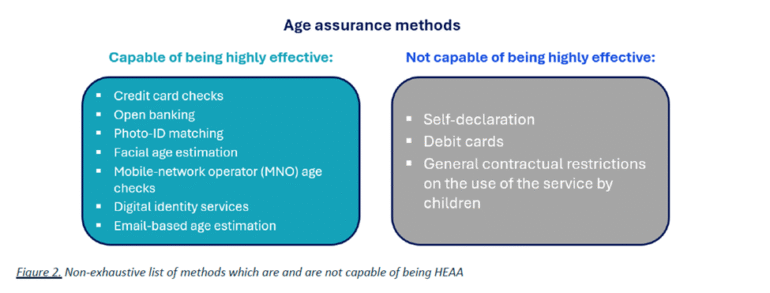

Per Ofcom’s guidance (see here and here), an age assurance method must be technically accurate, robust, reliable, and fair to be considered highly effective—and must also be easy to use and work for all users. The Joint Statement provides the following summary of age assurance methods and their ability to be considered “highly effective”:

Source: Joint Statement, page 5.

The Joint Statement highlights that the OSA does not require services to set a minimum age. However, services that choose to do so must state the minimum age in their terms of service and apply it consistently. Services that do not use highly effective age assurance to enforce their minimum age must assume that underage children are using the service and must reflect this in their children’s risk assessments and corresponding mitigations.

3. The ICO Emphasises that Age Assurance Can Help Protect Children’s Personal Information.

From a data protection perspective, the ICO’s position is that age assurance can help organisations (i) avoid unlawful processing of children’s personal information by preventing children from accessing services that are not suitable for them, and (ii) implement age-appropriate protections in accordance with the ICO’s Children’s Code.

Preventing underage access. Where a service is not suitable for children under a certain age (for instance, where a minimum age of 13 is stated in the terms of service), the ICO explains that the service will generally lack a lawful basis for processing the personal data of children below that threshold. The Joint Statement identifies the implementation of an effective “age gate” as the best way to minimise the risk of unlawful processing. While the ICO does not mandate specific technologies, it points to facial age estimation, digital ID, and one-time photo matching as currently viable examples for services enforcing a minimum age requirement.

Children’s Code protections. Where a service is suitable for children (or children above a certain age), the ICO maintains that the organisation’s focus should be on ensuring an age-appropriate experience consistent with the ICO’s Children’s Code. In these cases, services should use age assurance methods that are proportionate to the risks of the platform and provide sufficient confidence to apply the Children’s Code standards according to the user’s age. The ICO’s Age Assurance Opinion further explains how services can use age assurance in a risk-based, proportionate way that complies with data protection law. In the Joint Statement, the ICO reiterates that services that lack reliable age information must apply the Children’s Code standards to all users as a default baseline of protection (in a way that still reflects whatever age information is available to the organisation).

Regardless of the specific age assurance method adopted, the Joint Statement underscores that services must comply with the full set of UK GDPR data protection principles. In practice, this means that organisations will need to take measures such as establishing a lawful basis (and obtaining parental consent if required), ensuring fairness and transparency (including through age-appropriate transparency notices), and demonstrating accountability (including through Data Protection Impact Assessments (“DPIAs”)). The ICO has published detailed guidance on DPIAs in the context of the Children’s Code, including example DPIAs (available here). In the Joint Statement, the ICO also highlights the availability of the Age Check Certification Scheme (“ACCS”) to help services identify age assurance providers that meet UK data protection standards.

4. Two Practical Examples Illustrate How the UK’s Safety and Data Protection Regimes Interact.

The Joint Statement includes two hypothetical compliance scenarios: one for a user-to-user pornography service and one for a large social media platform. Each scenario sets out the hypothetical compliance approach in a side-by-side, two-column chart, illustrating how a service can implement age assurance that meets both online safety and data protection requirements.

In the first example, a user-to-user pornography service uses highly effective age assurance to prevent access to pornographic content, ensures that no such content is accessible before the age check is completed, and takes steps to reduce circumvention risk. From a data protection perspective, the example states that the service relies on legal obligation as its lawful basis for the age assurance processing, applies the data protection principles, provides clear privacy information, offers mechanisms to challenge inaccurate age decisions, and reviews its DPIA as risks evolve.

In the second example, a large social media service with a minimum age of 13 uses highly effective age assurance to restrict access to a “Not Safe For Work” section (which includes pornography) and, separately, uses robust age assurance at the account-creation stage to identify and block users under 13. The example also emphasises accountability measures, including conducting a DPIA, applying the data protection principles to the age assurance process, and continuing to assess whether the chosen approach remains fit for purpose.

* * *

The Joint Statement arrives at a moment of intensifying regulatory focus on age assurance, both in the UK and across Europe. Since the beginning of the year, UK and European regulators have announced several enforcement actions and ongoing investigations relating to age assurance. The Joint Statement complements Ofcom’s call earlier this month for major platforms to report, by April 30, 2026, on the steps they are taking across four priority areas: minimum age policies, anti-grooming controls, safer algorithmic feeds, and risk assessments of new AI features before deployment. Separately, the UK Government’s consultation on children’s online experiences remains open until May 26, 2026 and is likely to inform the development of further rules in this area (as described in our previous blog post here). At the EU level, the European Commission has published guidelines on the protection of minors under the Digital Services Act (as described here) and has released a blueprint for a privacy-preserving age verification solution, interoperable with the forthcoming EU Digital Identity Wallets, that is currently being piloted in several Member States. As these cross-jurisdictional pieces of the privacy-protective age assurance “puzzle” come together, organisations will have several sources of guidance to consider as they design and adopt their own solutions.